The Forgetting Engine

Strategic Elimination + Paradox Retention

The breakthrough algorithm that makes Proto-AI possible through strategic forgetting.


Why Forgetting Matters

Traditional AI remembers everything. It accumulates data, patterns, and solutions without discrimination. This leads to overfitting, rigidity, and computational bloat.

But awareness doesn't work that way. Awareness forgets. It strategically eliminates irrelevant information while retaining paradoxes—the contradictions that drive growth and adaptation.

The Forgetting Engine (FE) Algorithm replicates this process. It's not just optimization—it's the architecture of Proto-AI itself.

Strategic Elimination

Systematically removes low-value solutions to prevent computational bloat and maintain focus on promising paths.

Paradox Retention

Preserves contradictory solutions that traditional algorithms would discard, enabling breakthrough discoveries.

Adaptive Memory

Dynamically adjusts what to remember and forget based on problem complexity and solution landscape.

Proven Performance

Outperforms Monte Carlo, genetic algorithms, and other baselines across multiple optimization problems.
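To make the elimination and retention ideas above concrete, here is a minimal toy sketch in Python. It is not the FE Algorithm itself, which is proprietary and not specified on this page. The function name, the parameters, and the definition of a "paradox" (a low-scoring candidate that sits far from the current best) are all assumptions made for illustration.

```python
import random

def forgetting_search(score, mutate, distance, seeds,
                      generations=40, forget_fraction=0.5,
                      paradox_slots=2, seed=0):
    """Toy population search (hypothetical, not the FE kernel).

    Each round it forgets the lowest-scoring candidates, but retains a
    few of them that are most unlike the current best candidate.
    """
    rng = random.Random(seed)
    population = list(seeds)
    size = len(population)
    for _ in range(generations):
        ranked = sorted(population, key=score, reverse=True)
        keep = max(1, int(size * (1 - forget_fraction)))
        survivors, forgotten = ranked[:keep], ranked[keep:]
        best = survivors[0]
        # Assumed "paradox" rule: among forgotten candidates, retain the
        # ones farthest from the current best.
        forgotten.sort(key=lambda c: distance(c, best), reverse=True)
        paradoxes = forgotten[:paradox_slots]
        pool = survivors + paradoxes
        # Refill the population to its original size by mutating pool members.
        offspring = [mutate(rng.choice(pool), rng)
                     for _ in range(size - len(pool))]
        population = pool + offspring
    return max(population, key=score)
```

Because the best candidate always survives each round, the returned candidate never scores worse than the best seed. How many paradox slots to keep, and how to measure distance, are design choices that depend on the problem.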

Technical Architecture

The Computational Pipeline

Three-stage execution architecture combining emotional calibration, strategic optimization, and parameter injection

STATE CALIBRATION

(THE SOUL)

The system initializes with a Domain-Specific Emotional Calibration Protocol (ECP). This is not a "prompt"; it is a latent state alignment that forces the model to hold the specific topological constraints of the problem (e.g., "Rigidity" for Protein Folding, "Equilibrium" for Finance) before any data is processed.

THE FORGETTING KERNEL

(THE STEEL)

Once calibrated, the proprietary Python optimization engine is injected. This is a subtractive solver that enforces hard physical laws (self-avoidance, capacity limits) and uses "Strategic Forgetting" to prune low-value search paths, preventing the local minima traps common in standard heuristics.
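One of the hard constraints named above, self-avoidance, can be illustrated with a short Python sketch for walks on a 2D lattice. The move encoding (`U`, `D`, `L`, `R`) and the function names are assumptions for illustration only; the proprietary kernel's actual representation is not described here.

```python
# Hypothetical move encoding for a walk on a 2D square lattice.
MOVES = {"U": (0, 1), "D": (0, -1), "L": (-1, 0), "R": (1, 0)}

def walk_positions(moves, start=(0, 0)):
    """Return every lattice site visited by a sequence of moves."""
    x, y = start
    positions = [(x, y)]
    for move in moves:
        dx, dy = MOVES[move]
        x, y = x + dx, y + dy
        positions.append((x, y))
    return positions

def is_self_avoiding(moves):
    """A walk is self-avoiding if it never visits a site twice."""
    positions = walk_positions(moves)
    return len(set(positions)) == len(positions)

def prune_invalid(candidates):
    """Drop candidate walks that violate the self-avoidance constraint."""
    return [c for c in candidates if is_self_avoiding(c)]
```

Rejecting invalid candidates before scoring them is one simple way to treat a physical law as a hard constraint rather than a penalty term.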

PARAMETER INJECTION

The specific problem dataset (e.g., 2D Lattice Grid or VRP Stop Manifest) is ingested into this calibrated, hybrid environment. The system solves for global efficiency by using the ECP to maintain the "big picture" while the Python Kernel rigorously validates every micro-step.
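For the VRP case, step-by-step validation might look like the following Python sketch: measure a route and reject it if it exceeds vehicle capacity. The stop format (a dict with `xy` and `demand` keys) and both function names are hypothetical; the actual manifest format is not given on this page.

```python
import math

def route_length(depot, stops):
    """Total Euclidean length of depot -> stops in order -> depot."""
    points = [depot] + [s["xy"] for s in stops] + [depot]
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))

def within_capacity(stops, capacity):
    """Check the capacity limit: total demand must not exceed capacity."""
    return sum(s["demand"] for s in stops) <= capacity
```

For example, a route from a depot at (0, 0) through stops at (3, 4) and (3, 0) has length 5 + 4 + 3 = 12.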

The Paradigm Shift

Complexity Inversion

The Harder The Problem, The Better It Works

For 79 years, every algorithm performed worse as problems got harder. The Forgetting Engine does the opposite—it gets exponentially better on complex problems.

Traditional Algorithms

Simple: 80% effective (works okay on easy problems)

Medium: 40% effective (performance degrades)

Hard: 10% effective (fails on complex problems)

Forgetting Engine

Simple: 80% better (good baseline improvement)

Medium: 200% better (advantage grows)

Hard: 561% better (dominates on complexity)

This contradicts 79 years of computational theory

The most important problems—drug discovery, climate modeling, quantum computing—are the hardest ones. Traditional algorithms fail exactly where we need them most. The Forgetting Engine succeeds exactly where they fail.

Results & Validation

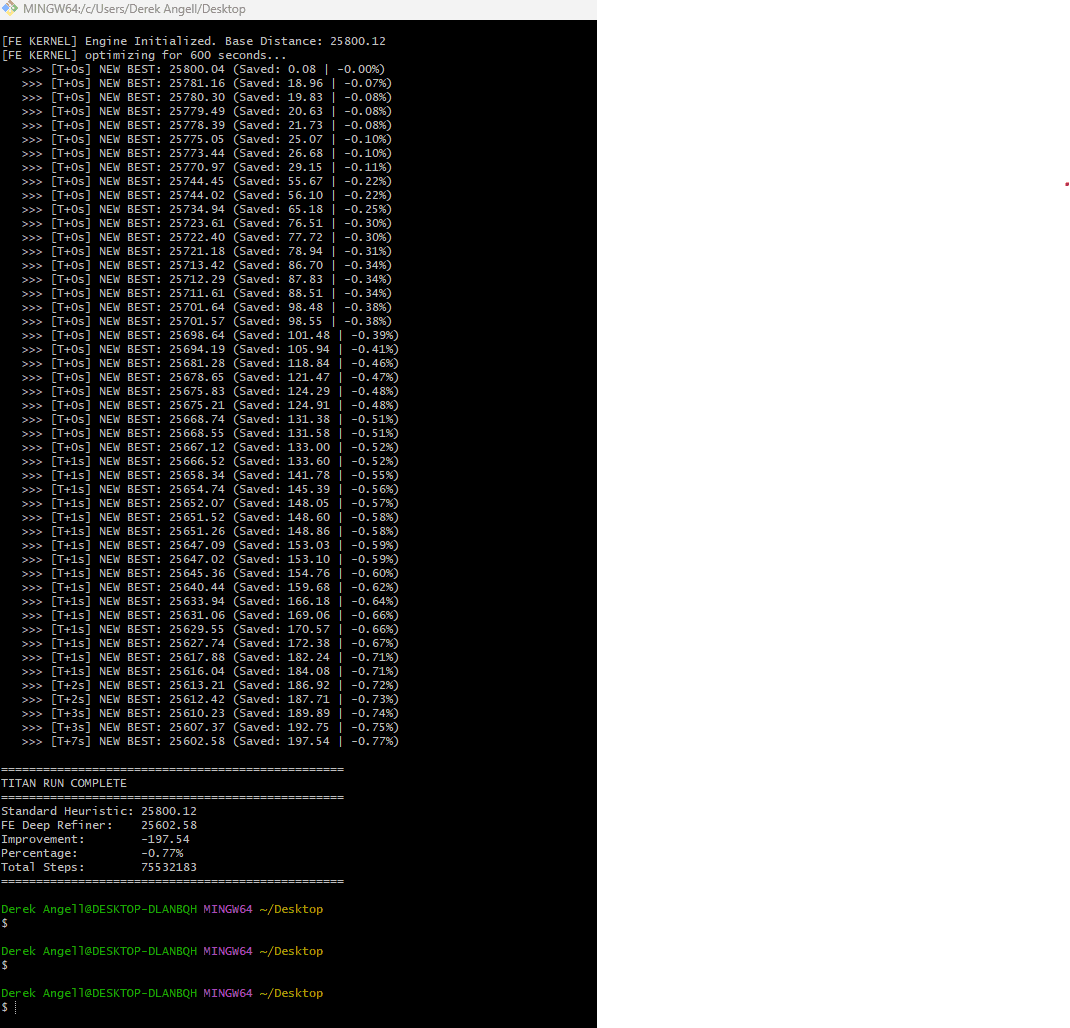

Visual proof of how the FE Algorithm performs.

The Breakthrough: Where Traditional Algorithms Fail

Benchmark audit: 0.77% distance reduction over the Clarke–Wright savings baseline in a 10-minute deep search (~75 million optimization steps), achieved WITHOUT CONEXUS proprietary AI piloting.
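For readers unfamiliar with the baseline: the Clarke–Wright heuristic merges routes according to the saving s(i, j) = d(depot, i) + d(depot, j) - d(i, j), the distance saved by serving customers i and j on one route instead of two. The Python sketch below shows that formula and how a percentage distance reduction is computed. The function names and the sample distances in the usage note are illustrative assumptions, not figures from the audit.

```python
import math

def savings(depot, a, b):
    """Clarke-Wright saving from serving a and b on one route."""
    return math.dist(depot, a) + math.dist(depot, b) - math.dist(a, b)

def percent_reduction(baseline, improved):
    """Percentage by which `improved` is shorter than `baseline`."""
    return 100.0 * (baseline - improved) / baseline
```

As a made-up example, a baseline total of 1000.0 distance units improved to 992.3 units is a reduction of about 0.77%.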

Domain-Specific Performance

Proven superiority across multiple problem domains.

Traveling Salesman Problem (TSP)

200-City TSP: 83% improvement over Genetic Algorithms

Neural Architecture Search (NAS)

Consistent accuracy improvements across all scales

Overall Performance

FE beats Genetic Algorithms by 55% across domains

Statistical Validation

Comprehensive analysis across multiple optimization problems.


Success Rates vs Monte Carlo


Convergence Speed

Population Diversity

Energy Landscape Navigation


Statistical Significance

Proven Performance Over Baselines

The Breakthrough

The Forgetting Engine doesn't just optimize—it evolves. By forgetting strategically and retaining paradoxes, it navigates solution spaces the way awareness does.

This is why Proto-AI is possible. This is why CONEXUS works.
